2-Kubernetes Getting Started: Installing and Deploying a Cluster on CentOS

Author: 快盘下载

[TOC]

0x00 Preface

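Description: In the previous article on the K8s architecture we got a first look at how a single master node and a worker node are deployed. That setup used the installation script provided by Kuboard, which is good enough for testing and practice. In a real production environment, however, the complexity and variety of the workloads mean that a cluster is needed to keep the system secure and reliable.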

Before installing K8s, prepare by planning the cluster along the following lines:

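(1) Operating system (OS). Description: On CentOS 7.x it is recommended to upgrade the kernel (stable >= 4.19); otherwise you may run into problems when running some Java containers. Early on, you can deploy a few Java applications in a test environment and watch for such problems; if they appear, try upgrading the kernel to solve them.

Q: When Java containers are started all at once, the CPU of the whole cluster is exhausted. How can the CPU contention during Java startup be resolved?

A: We ran into this problem too. After upgrading the kernel to 4.19 it no longer occurred; we solved many of our out-of-memory and CPU-spike problems by upgrading the kernel.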

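PS: Note that Linux kernel 3.10.x has some bugs that make Docker and Kubernetes unstable.

PS: As mentioned earlier, worker nodes that carry workloads should run on physical machines where possible, while master nodes are best installed in a vSphere virtualized environment so that they are easy to recover.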
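To check whether a node already meets the kernel >= 4.19 advice above before installing anything, a small shell function can compare `uname -r` with a minimum version. This is a minimal sketch, assuming GNU coreutils `sort -V` (available on CentOS 7/8); the function name `kernel_at_least` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the kernel release is at least the given minimum.
# $1 = minimum version (e.g. 4.19), $2 = kernel release (defaults to `uname -r`)
kernel_at_least() {
    min="$1"
    cur="${2:-$(uname -r)}"
    # Keep only the leading numeric part, e.g. "3.10.0-1127.el7.x86_64" -> "3.10.0"
    cur="$(printf '%s\n' "$cur" | sed 's/^\([0-9][0-9.]*\).*/\1/')"
    # Version-sort both values; if the smaller one is $min, then cur >= min
    lowest="$(printf '%s\n%s\n' "$min" "$cur" | sort -V | head -n 1)"
    [ "$lowest" = "$min" ]
}
```

For example, `kernel_at_least 4.19 || echo "consider upgrading the kernel"` checks the running kernel, since the second argument defaults to `uname -r`.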

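(2) Stable version selection (VERSION). As of this write-up (2020-06-20), the release stable versions still maintained upstream by Kubernetes are 1.16.x, 1.17.x and 1.18.x. The 1.14.x and 1.15.x lines are close to EOL, so older versions are not recommended. All things considered, a patch release with 4 < x < 10 is currently the most suitable choice, i.e. 1.17.4 or 1.17.5, or alternatively 1.18.3.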

For docker-ce, the latest version in the official yum repository is fine, i.e. docker-ce-19.03.9-3.el7. For harbor, v2.0.0-rc1 has only just been released and is not recommended; choose v1.9.4 instead. If a later problem can only be solved by upgrading, consider upgrading to a release stable v2.x.x version.

| pkg | version | release date |
|---|---|---|
| kubernetes | v1.17.5 | 2020-04-16 |
| docker-ce | 19.03.9 | 2020-04-12 |
| harbor | v1.9.4 | 2020-12-31 |

0x01 Installation and Deployment

Description: Because we want to choose the components and plugins ourselves, we deploy K8s manually with kubeadm (single node or cluster).

0. Base installation environment

Description: For a kubeadm installation, the following must be run on every node, whether worker or master. Recommended system environment:

System versions

```
# OS: CentOS 7.x/8.x (7.8 recommended for this environment), Ubuntu (18.04)
# Kernel: >= 4.18
# Docker version: 19.03.9
# Kubernetes: 1.18.3
```

Environment checks

```bash
# 1. Set the hostname of the current node
hostnamectl set-hostname master-01
hostnamectl status

# 2. kubeadm checks that swap is disabled on the host, so turn off swap and SELinux
# Turn off swap and SELinux temporarily
swapoff -a
setenforce 0
# Turn off swap and SELinux permanently
yes | cp /etc/fstab /etc/fstab_bak
cat /etc/fstab_bak | grep -v swap > /etc/fstab
sed -i 's/^SELINUX=.*$/SELINUX=disabled/' /etc/selinux/config

# 3. Hostname resolution
echo "127.0.0.1 $(hostname)" >> /etc/hosts
cat <<EOF >> /etc/hosts
10.80.172.211 master-01
EOF

# 4. Disable the firewall
systemctl stop firewalld
systemctl disable firewalld
```
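The `grep -v swap` step above deletes every line containing "swap" from `/etc/fstab`. A gentler alternative comments the swap entry out instead, so it is easy to restore later. This is a sketch that assumes the swap entry's third field (the filesystem type) is `swap`; the helper name `disable_swap_in_fstab` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: comment out (rather than delete) active swap entries in an fstab file.
# $1 = path to the fstab file (defaults to /etc/fstab)
disable_swap_in_fstab() {
    fstab="${1:-/etc/fstab}"
    # Keep a backup, as the original steps do
    cp "$fstab" "${fstab}_bak"
    # Prefix '#' to every non-comment line whose filesystem type (field 3) is "swap"
    awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "${fstab}_bak" > "$fstab"
}
```

`swapoff -a` is still needed to turn swap off on the running system; the helper only changes what is mounted at the next boot.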

Kernel parameter tuning:

```bash
# Kernel parameters are configured in /etc/sysctl.conf
# (a drop-in file such as /etc/sysctl.d/99-kubernetes-cri.conf would also work)
# The net.bridge.* keys only exist once the br_netfilter module is loaded
modprobe br_netfilter
egrep -q "^(#)?net.ipv4.ip_forward.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv4.ip_forward.*|net.ipv4.ip_forward = 1|g" /etc/sysctl.conf || echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-ip6tables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-ip6tables.*|net.bridge.bridge-nf-call-ip6tables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-iptables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-iptables.*|net.bridge.bridge-nf-call-iptables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.disable_ipv6.*|net.ipv6.conf.all.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.default.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.default.disable_ipv6.*|net.ipv6.conf.default.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.default.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.lo.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.lo.disable_ipv6.*|net.ipv6.conf.lo.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.lo.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.forwarding.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.forwarding.*|net.ipv6.conf.all.forwarding = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.forwarding = 1" >> /etc/sysctl.conf
# Apply the settings
sysctl -p
```
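The seven `egrep ... && sed ... || echo ...` lines above repeat one idiom: rewrite the key if it already exists (even commented out), otherwise append it. One way to factor that into a single function is sketched below. The name `set_sysctl` is hypothetical, it assumes GNU `sed -i`, and unlike the original patterns it requires `=` right after the key, so `net.ipv4.ip_forward` does not also rewrite a longer key such as `net.ipv4.ip_forward_use_pmtu`.

```shell
#!/bin/sh
# Hypothetical helper: set KEY = VALUE in a sysctl-style file.
# Usage: set_sysctl KEY VALUE [FILE]   (FILE defaults to /etc/sysctl.conf)
set_sysctl() {
    key="$1"; value="$2"; file="${3:-/etc/sysctl.conf}"
    # Escape dots so that the key is matched literally, e.g. "net\.ipv4\.ip_forward"
    key_re="$(printf '%s' "$key" | sed 's/\./\\./g')"
    if grep -Eq "^#?[[:space:]]*${key_re}[[:space:]]*=" "$file"; then
        # Rewrite the existing (possibly commented-out) line in place
        sed -ri "s|^#?[[:space:]]*${key_re}[[:space:]]*=.*|${key} = ${value}|" "$file"
    else
        # Key absent: append it
        echo "${key} = ${value}" >> "$file"
    fi
}
```

`set_sysctl net.ipv4.ip_forward 1` followed by `sysctl -p` would reproduce the effect of the first line of the original block.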

1. Docker installation and configuration

Description: This step installs a specific docker-ce version and downloads and configures docker-compose. Note that it must be run on both master and worker nodes.

```bash
# Applies to: CentOS
# Docker Hub registry mirror: run on both master and worker nodes
# Tencent Cloud Docker Hub mirror
# export REGISTRY_MIRROR="https://mirror.ccs.tencentyun.com"
# DaoCloud mirror
# export REGISTRY_MIRROR="http://f1361db2.m.daocloud.io"
# Alibaba Cloud Docker Hub mirror (an empty value would write "" into daemon.json)
export REGISTRY_MIRROR="https://registry.cn-hangzhou.aliyuncs.com"

# Install docker
# Reference documentation:
# https://docs.docker.com/install/linux/docker-ce/centos/
# https://docs.docker.com/install/linux/linux-postinstall/

# Remove old versions
yum remove -y docker docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-selinux docker-engine-selinux docker-engine
# Install base dependencies
yum install -y yum-utils lvm2 wget
# nfs-utils must be installed before NFS network storage can be mounted
yum install -y nfs-utils
# Add the docker package repository
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# CentOS 8
# dnf -y install https://download.docker.com/linux/centos/7/x86_64/stable/Packages/containerd.io-1.2.6-3.3.el7.x86_64.rpm

# Install docker
yum list docker-ce --showduplicates | sort -r
read -p 'Enter the docker-ce version to install (e.g. 19.03.9): ' VERSION
yum install -y docker-ce-${VERSION} docker-ce-cli-${VERSION} containerd.io

# Install docker-compose
curl -L https://get.daocloud.io/docker/compose/releases/download/1.25.5/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose
chmod +x /usr/local/bin/docker-compose

# Registry mirror configuration
# curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s ${REGISTRY_MIRROR}
# curl -sSL https://kuboard.cn/install-script/set_mirror.sh | sh -s ${REGISTRY_MIRROR}
# General method (also works on CentOS 8)
mkdir -p /etc/docker/
cat > /etc/docker/daemon.json <<EOF
{"registry-mirrors": ["REPLACE"]}
EOF
sed -i "s#REPLACE#${REGISTRY_MIRROR}#g" /etc/docker/daemon.json

# Start docker and show the installed version information
systemctl enable docker
systemctl start docker
docker-compose -v
docker info
```
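The `read -p` prompt above makes the script interactive. To pin a release line without prompting, a sketch like the following could pick the newest matching version from the `yum list docker-ce --showduplicates` output. It assumes the usual `name.arch  epoch:version-release  repo` column layout and GNU `sort -V`; the function name `pick_docker_version` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `yum list docker-ce --showduplicates` output on stdin and
# print the newest upstream version starting with the given prefix (e.g. 19.03).
pick_docker_version() {
    # Escape dots in the prefix: "19.03" -> "19\.03"
    re="$(printf '%s' "$1" | sed 's/\./\\./g')"
    # Field 2 looks like "3:19.03.9-3.el7": drop the epoch and the release suffix
    awk '$1 ~ /^docker-ce\./ { v = $2; sub(/^[0-9]+:/, "", v); sub(/-.*$/, "", v); print v }' \
        | grep -E "^${re}([.]|\$)" \
        | sort -uV | tail -n 1
}
```

For example, `VERSION=$(yum list docker-ce --showduplicates | pick_docker_version 19.03)` followed by the same `yum install -y docker-ce-${VERSION} docker-ce-cli-${VERSION} containerd.io` as above.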

2. K8s base environment

Description: The following installs the K8s base environment; the master and worker nodes are then initialized separately.

K8s environment installation and setup:

```bash
# Kubernetes version
export K8SVERSION="1.18.3"
# Remove old versions
yum remove -y kubelet kubeadm kubectl
# Configure the K8s yum repository
cat <<'EOF' > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
# Install kubelet, kubeadm and kubectl at the version in ${K8SVERSION} (e.g. 1.18.3)
yum list kubeadm --showduplicates | sort -r
yum install -y kubeadm-${K8SVERSION} kubectl-${K8SVERSION} kubelet-${K8SVERSION}

# Change the docker cgroup driver to systemd
# In /usr/lib/systemd/system/docker.service, change the line
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock
# to
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd
# Without this change you may see the following warning when adding worker nodes:
#   [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".
#   Please follow the guide at https://kubernetes.io/docs/setup/cri/
sed -i "s#^ExecStart=/usr/bin/dockerd.*#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd#g" /usr/lib/systemd/system/docker.service

# Restart docker and start kubelet
systemctl daemon-reload
systemctl restart docker
systemctl enable kubelet && systemctl start kubelet
```
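Instead of editing `docker.service` with `sed`, the cgroup driver can also be set through the `exec-opts` key of `/etc/docker/daemon.json`, which is not overwritten when a package upgrade replaces the unit file. The sketch below writes such a file together with the optional registry mirror; the helper name `write_daemon_json` is hypothetical. Use only one of the two approaches: dockerd is expected to refuse to start when the same option is given both as a command-line flag and in `daemon.json`.

```shell
#!/bin/sh
# Hypothetical helper: write a daemon.json that selects the systemd cgroup driver.
# Usage: write_daemon_json FILE [MIRROR]; an empty MIRROR omits "registry-mirrors".
write_daemon_json() {
    file="$1"; mirror="$2"
    mkdir -p "$(dirname "$file")"
    if [ -n "$mirror" ]; then
        printf '{\n  "exec-opts": ["native.cgroupdriver=systemd"],\n  "registry-mirrors": ["%s"]\n}\n' \
            "$mirror" > "$file"
    else
        printf '{\n  "exec-opts": ["native.cgroupdriver=systemd"]\n}\n' > "$file"
    fi
}
```

For example, `write_daemon_json /etc/docker/daemon.json "${REGISTRY_MIRROR}"` followed by `systemctl daemon-reload && systemctl restart docker`; `docker info | grep -i cgroup` should then report `systemd`.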

3. master - control-plane node configuration

Description: environment variables used during initialization

- APISERVER_NAME must not be the master's hostname.
- APISERVER_NAME may contain only lowercase letters, digits and dots; it must not contain a hyphen.
- The range used by POD_SUBNET must not overlap with the network of the master/worker nodes. The value is a CIDR; if you are not familiar with CIDR, simply run `export POD_SUBNET=10.100.0.1/16` unchanged.

```bash
# Kubernetes version
export K8SVERSION="1.18.3"
# Internal IP of the master node (IPADDR must already hold it; otherwise replace ${IPADDR} with the address)
# export only applies to the current shell session; if you continue in a new shell window, re-run these export commands
export MASTER_IP=${IPADDR}
# Replace apiserver.test with the DNS name you want
export APISERVER_NAME=apiserver.test
# Alibaba Cloud docker hub mirror
export REGISTRY_MIRROR=https://registry.cn-hangzhou.aliyuncs.com
# Run on the master node only
# Subnet for Kubernetes pods; Kubernetes creates it during installation, it does not exist in your physical network beforehand
export POD_SUBNET=10.100.0.1/16
echo "${MASTER_IP} ${APISERVER_NAME}" >> /etc/hosts

if [ ${#POD_SUBNET} -eq 0 ] || [ ${#APISERVER_NAME} -eq 0 ]; then
  echo -e "\033[31;1mMake sure the environment variables POD_SUBNET and APISERVER_NAME are set\033[0m"
  echo "Current POD_SUBNET=$POD_SUBNET"
  echo "Current APISERVER_NAME=$APISERVER_NAME"
  exit 1
fi

# Full list of configuration options: https://godoc.org/k8s.io/kubernetes/cmd/kubeadm/app/apis/kubeadm/v1beta2
rm -f ./kubeadm-config.yaml
cat <<EOF > ./kubeadm-config.yaml
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v${K8SVERSION}
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
controlPlaneEndpoint: "${APISERVER_NAME}:6443"
networking:
  serviceSubnet: "10.99.0.0/16"
  podSubnet: "${POD_SUBNET}"
  dnsDomain: "cluster.local"
EOF

# kubeadm init; depending on your network speed this takes 3 - 10 minutes
kubeadm init --config=kubeadm-config.yaml --upload-certs

# Configure kubectl (important: without this, kubectl cannot be used)
rm -rf /root/.kube/
mkdir /root/.kube/
cp -i /etc/kubernetes/admin.conf /root/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Install the calico network plugin
# Reference: https://docs.projectcalico.org/v3.13/getting-started/kubernetes/self-managed-onprem/onpremises
echo -e "---Installing calico-3.13.1---"
rm -f calico-3.13.1.yaml
wget https://kuboard.cn/install-script/calico/calico-3.13.1.yaml
kubectl apply -f calico-3.13.1.yaml

# Run on the master node only
# Wait 3-10 minutes until all pods are in the Running state
watch kubectl get pod -n kube-system -o wide
echo -e "---Waiting for the pods to be created---" && sleep 180
# Check the master node initialization result
kubectl get nodes -o wide
```
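The `if` test in the script only checks that POD_SUBNET and APISERVER_NAME are non-empty. The naming rules listed at the top of this step could be checked as well, as in this sketch. The helper `check_apiserver_name` is hypothetical; it uses POSIX character classes so the result does not depend on the locale's collation order.

```shell
#!/bin/sh
# Hypothetical helper: validate APISERVER_NAME against the rules above.
# Usage: check_apiserver_name NAME [MASTER_HOSTNAME]; returns 0 if NAME is acceptable.
check_apiserver_name() {
    name="$1"; host="${2:-$(hostname)}"
    [ -n "$name" ] || { echo "APISERVER_NAME is empty" >&2; return 1; }
    [ "$name" != "$host" ] || { echo "APISERVER_NAME must not equal the master hostname" >&2; return 1; }
    # Only lowercase letters, digits and dots: no '-', no '_', no uppercase
    printf '%s\n' "$name" | grep -Eqx '[[:lower:][:digit:].]+' \
        || { echo "APISERVER_NAME may only contain lowercase letters, digits and dots" >&2; return 1; }
}
```

`check_apiserver_name "${APISERVER_NAME}" || exit 1` could be placed right after the `export` lines.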

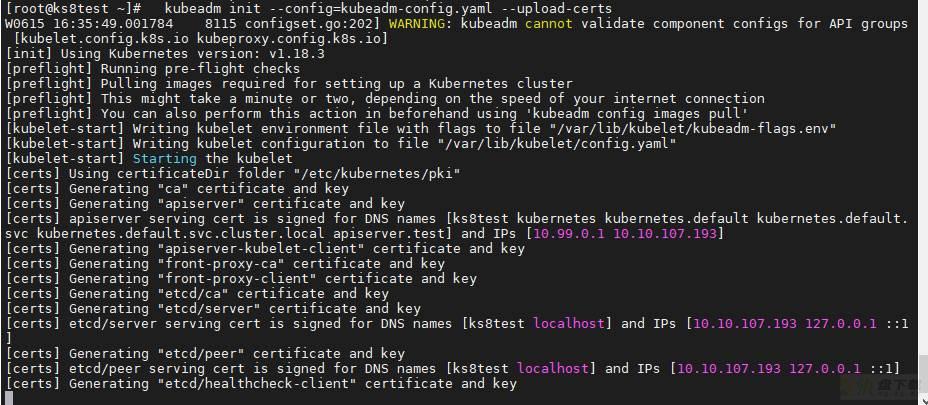

Execution result:

```
# Indicates that Kubernetes was initialized successfully
Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

# You can now join any number of control-plane nodes (cluster) by running the following command on each as root:
kubeadm join apiserver.test:6443 --token hzlzrr.uwuegx4locpu36oc --discovery-token-ca-cert-hash sha256:4cbe428cb3503277be9fbcf3a99de82a97397a624dd94d4270c4eed1b861f951 --control-plane --certificate-key 28b178f04afae3770aa92add0206650b2359dd61424f127a6d44142dd15a280d

# Join any number of worker nodes by running the following on each as root:
kubeadm join apiserver.test:6443 --token hzlzrr.uwuegx4locpu36oc --discovery-token-ca-cert-hash sha256:4cbe428cb3503277be9fbcf3a99de82a97397a624dd94d4270c4eed1b861f951
```


4. node - worker node configuration

Note: the following commands are run on worker nodes only.

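```bash
# Run on worker nodes only
# APISERVER_NAME must be the same value used on the master (apiserver.test above)
export APISERVER_NAME=apiserver.test
read -p "Enter the IP address of the K8s master node: " MASTER_IP
echo "${MASTER_IP} ${APISERVER_NAME}" >> /etc/hosts
echo -e "\e[32m# Run the following command on the master node only: kubeadm token create --print-join-command prints the kubeadm join command with its arguments; run that command on the worker node"
echo -e "[Note]: the token is valid for 24 hours; within 24 hours you can use it to initialize any number of worker nodes\e[0m"
```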

Run each of the following commands on the machine or node indicated by its comment.

```
# Master
[root@ks8test ~]# kubeadm token create --print-join-command
W0616 15:10:45.622701   23160 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
kubeadm join apiserver.test:6443 --token 5q3zl5.4h2xllxhy7gxccx1 --discovery-token-ca-cert-hash sha256:4cbe428cb3503277be9fbcf3a99de82a97397a624dd94d4270c4eed1b861f951

# Nodes
[root@node-1 ~]# ./CentOS7-k8s_init.sh node node-1
Enter the IP address of the K8s master node: 10.10.107.193
# Run the following command on the master node only: kubeadm token create --print-join-command prints the kubeadm join command with its arguments; run that command on the worker node
[Note]: the token is valid for 2 hours; within 2 hours you can use it to initialize any number of worker nodes
[root@node-1 ~]# kubeadm join apiserver.test:6443 --token 5q3zl5.4h2xllxhy7gxccx1 --discovery-token-ca-cert-hash sha256:4cbe428cb3503277be9fbcf3a99de82a97397a624dd94d4270c4eed1b861f951
[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.18" ConfigMap in the kube-system namespace
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

# On the master, list the nodes that have joined
[root@ks8test ~]# kubectl get nodes
NAME      STATUS   ROLES    AGE   VERSION
ks8test   Ready    master   22h   v1.18.3
node-1    Ready    <none>   67s   v1.18.3
```
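Rather than reading the `kubectl get nodes` table by eye, the check can be scripted. This is a minimal sketch, assuming the default column order (NAME, STATUS, ...) shown above; the helper name `all_nodes_ready` is hypothetical, and it treats any status other than exactly `Ready` (for example `NotReady` or `Ready,SchedulingDisabled`) as not ready.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get nodes` output on stdin, print the names of
# nodes whose STATUS is not "Ready", and return 1 if there are any.
all_nodes_ready() {
    awk 'NR > 1 && $2 != "Ready" { print $1; bad = 1 }
         END { exit (bad ? 1 : 0) }'
}
```

For example: `kubectl get nodes | all_nodes_ready && echo "all nodes are Ready"`.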

0x02 Manually Installing a K8s Cluster (Online)

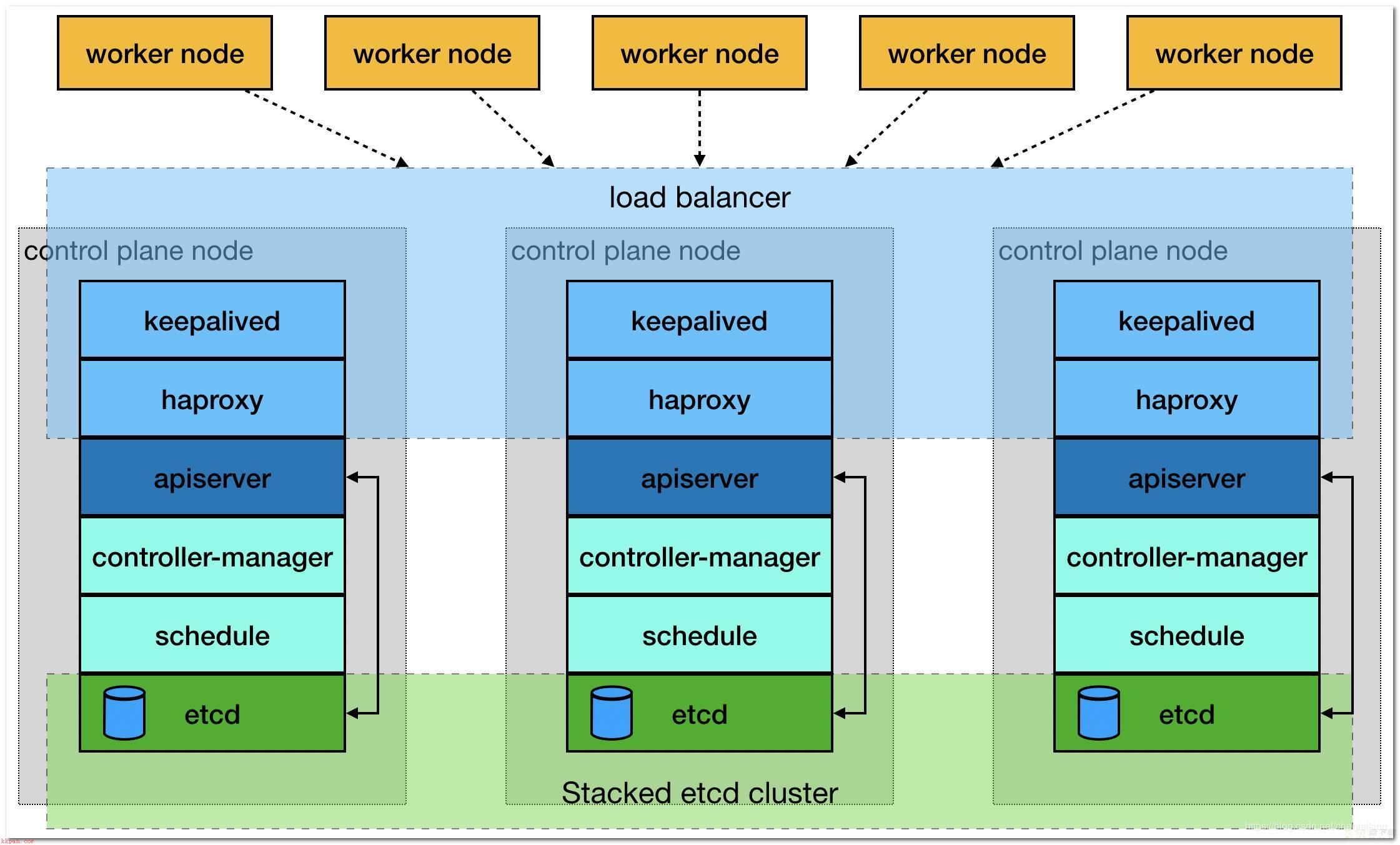

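Description: A highly available K8s cluster consists of at least three master nodes plus any number of worker nodes; with fewer masters (for example only two), the cluster can run into split-brain problems when a master fails.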

- Three masters form the master cluster, load-balanced through an internal load balancer.
- Multiple workers form the worker cluster, load-balanced through an external load balancer.

WeiyiGeek. Cluster architecture

Cluster installation environment and IP address plan:

```
# Operating system
CentOS Linux release 7.8.2003 (Core)
# Kernel version
5.7.0-1.el7.elrepo.x86_64
# Application versions
docker 19.03.9
docker-compose 1.25.5
Kubernetes 1.18.4
# Required images and versions
# docker images | awk -F ' ' '{print $1":"$2}'
# REPOSITORY:TAG
mirrorgcrio/kube-proxy:v1.18.4
mirrorgcrio/kube-apiserver:v1.18.4
mirrorgcrio/kube-controller-manager:v1.18.4
mirrorgcrio/kube-scheduler:v1.18.4
calico/node:v3.13.1
calico/pod2daemon-flexvol:v3.13.1
calico/cni:v3.13.1
calico/kube-controllers:v3.13.1
mirrorgcrio/pause:3.2
mirrorgcrio/coredns:1.6.7
mirrorgcrio/etcd:3.4.3-0
```

| IP | Hostname | Notes |
|---|---|---|
| 10.10.107.191 | master-01 | primary master node |
| 10.10.107.192 | master-02 | secondary master node |
| 10.10.107.193 | master-03 | secondary master node |
| 10.10.107.194 | worker-01 | worker node |
| 10.10.107.196 | worker-02 | worker node |

Load balancer:

- Listening port: 6443/TCP
- Backend pool: master-01, master-02, master-03
- Implementation: nginx / haproxy / keepalived / a cloud provider's load-balancing product; this is not covered here for now.

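1. Every node should run CentOS 7.6 or 7.7 or later.
2. Every node has at least 2 CPU cores and at least 4 GB of memory.
3. No node's hostname is localhost; hostnames contain no underscores, dots or uppercase letters, and must not be duplicated.
4. Every node has a fixed internal IP address and a single network interface.
5. The IP addresses used by kubelet on all nodes can reach each other directly (no NAT mapping is needed), with no firewall, security-group isolation or SELinux in between.
6. Swap is turned off on every node.
7. The environment variable APISERVER_NAME used during initialization is the same on every node; it must not be a master's hostname, may contain only lowercase letters, digits and dots, and must not contain a hyphen.
8. The range used by the environment variable POD_SUBNET during initialization must not overlap with the network of the master/worker nodes (it is usually a Class A private CIDR).
9. If a configuration step fails while initializing a master node, run `kubeadm reset` before re-initializing that master.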
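Requirements 3 and 8 can be checked mechanically before running `kubeadm init`. The sketch below (hypothetical helpers `check_node_hostname` and `cidr_overlap`, POSIX shell arithmetic, IPv4 only) validates a hostname and tests whether two CIDR ranges overlap. Two CIDR blocks are either nested or disjoint, so they overlap exactly when their addresses agree under the shorter of the two prefixes. Whether hostnames are unique across nodes cannot be checked from a single node and is not covered.

```shell
#!/bin/sh
# Hypothetical helper: requirement 3, hostname is not localhost and uses only
# lowercase letters, digits and hyphens (so no '_', '.' or uppercase).
check_node_hostname() {
    [ "$1" != "localhost" ] || return 1
    printf '%s\n' "$1" | grep -Eqx '[[:lower:][:digit:]-]+'
}

# Convert a dotted-quad IPv4 address to a 32-bit integer
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Hypothetical helper: requirement 8, returns 0 if the two CIDR ranges overlap.
cidr_overlap() {
    a_ip=${1%/*}; a_len=${1#*/}
    b_ip=${2%/*}; b_len=${2#*/}
    # Compare both network addresses under the shorter prefix
    len=$a_len
    if [ "$b_len" -lt "$len" ]; then len=$b_len; fi
    [ "$len" -gt 0 ] || return 0          # a /0 range overlaps everything
    mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    a_net=$(( $(ip_to_int "$a_ip") & mask ))
    b_net=$(( $(ip_to_int "$b_ip") & mask ))
    [ "$a_net" -eq "$b_net" ]
}
```

For the plan above, `cidr_overlap 10.100.0.1/16 10.10.107.0/24` returns non-zero (no overlap), which is what requirement 8 asks for; the /24 node netmask is an assumption, since the text does not state it.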

Procedure:

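1. Run the following script on every host to configure the base environment and install docker/docker-compose/kubernetes; adjust it for each host first (mainly the node hostname, APISERVER and APIPORT). Base environment: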

```bash
# Change per host according to the IP address plan
export HOSTNAME=worker-02
# Registry mirror written into /etc/docker/daemon.json below (Alibaba Cloud mirror, as used earlier)
export REGISTRY_MIRROR=https://registry.cn-hangzhou.aliyuncs.com

# Turn off swap and SELinux temporarily
swapoff -a && setenforce 0
# Turn off swap and SELinux permanently
yes | cp /etc/fstab /etc/fstab_bak
cat /etc/fstab_bak | grep -v swap > /etc/fstab
sed -i 's/^SELINUX=.*$/SELINUX=disabled/' /etc/selinux/config

# Set the hostname (use the name from the IP address plan above that matches the host being installed)
hostnamectl set-hostname $HOSTNAME
hostnamectl status

# Hostname resolution
echo "127.0.0.1 $HOSTNAME" >> /etc/hosts
cat >> /etc/hosts <<EOF
10.10.107.191 master-01
10.10.107.192 master-02
10.10.107.193 master-03
10.10.107.194 worker-01
10.10.107.196 worker-02
EOF

# Command completion
echo "source <(kubectl completion bash)" >> ~/.bashrc

# DNS settings
echo -e "nameserver 223.6.6.6\nnameserver 192.168.10.254" >> /etc/resolv.conf

# Disable the firewall
systemctl stop firewalld && systemctl disable firewalld

# Docker installation and configuration (skip if Docker is already installed)
# Install base dependencies
yum install -y yum-utils lvm2 wget
# nfs-utils must be installed before NFS network storage can be mounted
yum install -y nfs-utils
# Add the docker package repository
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# List the available Docker versions and install Docker
yum list docker-ce --showduplicates | sort -r
read -p 'Enter the docker-ce version to install (e.g. 19.03.9): ' VERSION
yum install -y docker-ce-${VERSION} docker-ce-cli-${VERSION} containerd.io
# Install docker-compose
curl -L https://get.daocloud.io/docker/compose/releases/download/1.25.5/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose
chmod +x /usr/local/bin/docker-compose

# Registry mirror configuration
# Create /etc/docker/ if it does not exist
if [[ ! -d "/etc/docker/" ]];then mkdir /etc/docker/;fi
cat > /etc/docker/daemon.json <<EOF
{"registry-mirrors": ["REPLACE"]}
EOF
sed -i "s#REPLACE#${REGISTRY_MIRROR}#g" /etc/docker/daemon.json
# Start docker and show the installed version information
systemctl enable docker && systemctl start docker
docker-compose -v && docker info

# Configure kernel parameters in /etc/sysctl.conf
# (the net.bridge.* keys only exist once the br_netfilter module is loaded)
modprobe br_netfilter
egrep -q "^(#)?net.ipv4.ip_forward.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv4.ip_forward.*|net.ipv4.ip_forward = 1|g" /etc/sysctl.conf || echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-ip6tables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-ip6tables.*|net.bridge.bridge-nf-call-ip6tables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-iptables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-iptables.*|net.bridge.bridge-nf-call-iptables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.disable_ipv6.*|net.ipv6.conf.all.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.default.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.default.disable_ipv6.*|net.ipv6.conf.default.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.default.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.lo.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.lo.disable_ipv6.*|net.ipv6.conf.lo.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.lo.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.forwarding.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.forwarding.*|net.ipv6.conf.all.forwarding = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.forwarding = 1" >> /etc/sysctl.conf
# Apply the modified kernel parameters immediately
sysctl -p

# Configure the K8s yum repository
cat <<'EOF' > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
# List the kubelet/kubeadm/kubectl versions and install one common Kubernetes version, e.g. 1.18.4
yum list kubelet --showduplicates | tail -n 10
yum install -y kubelet-1.18.4 kubeadm-1.18.4 kubectl-1.18.4

# Change the docker cgroup driver to systemd
# In /usr/lib/systemd/system/docker.service, change the line
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock
# to
#   ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd
# Without this change you may see the following warning when adding worker nodes:
#   [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".
#   Please follow the guide at https://kubernetes.io/docs/setup/cri/
sed -i "s#^ExecStart=/usr/bin/dockerd.*#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd#g" /usr/lib/systemd/system/docker.service
# Restart docker and start kubelet
systemctl daemon-reload
systemctl enable kubelet
systemctl restart docker && systemctl restart kubelet
```
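Because the script appends to `/etc/hosts` unconditionally, running it twice duplicates every entry. A guarded append avoids that. This is a sketch with a hypothetical helper name, `add_host_entry`; it treats an entry as present when some line starts with the IP and lists the name among its fields.

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file only if that pair is not there yet.
# Usage: add_host_entry IP NAME [FILE]   (FILE defaults to /etc/hosts)
add_host_entry() {
    ip="$1"; name="$2"; file="${3:-/etc/hosts}"
    # Exit status 0 if a line has field 1 == IP and NAME in one of the later fields
    if ! awk -v ip="$ip" -v name="$name" \
        '$1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
         END { exit (found ? 0 : 1) }' "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}
```

For example, `add_host_entry 10.10.107.191 master-01` could replace the corresponding line of the `cat >> /etc/hosts <<EOF` block, and likewise for the other hosts.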

2. Initialize the master only on the primary master node (10.10.107.191); this node's IP is also the one used to access the cluster.

```bash
# Kubernetes version (must match the packages installed in step 1)
K8SVERSION=1.18.4
APISERVER_IP=10.10.107.191
APISERVER_NAME=k8s.weiyigeek.top
APISERVER_PORT=6443
SERVICE_SUBNET=10.99.0.0/16
# Calico default subnet
POD_SUBNET=10.100.0.1/16
echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts

# Initialization config (keeping each component's version in line with the K8s version is recommended)
rm -f ./kubeadm-config.yaml
cat <<EOF > ./kubeadm-config.yaml
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v${K8SVERSION}
imageRepository: mirrorgcrio
#imageRepository: registry.aliyuncs.com/google_containers
#imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
#imageRepository: gcr.azk8s.cn/google_containers
controlPlaneEndpoint: "${APISERVER_NAME}:${APISERVER_PORT}"
networking:
  serviceSubnet: "${SERVICE_SUBNET}"
  podSubnet: "${POD_SUBNET}"
  dnsDomain: "cluster.local"
EOF

# kubeadm init; depending on your network speed this takes 3 - 10 minutes
kubeadm init --config=kubeadm-config.yaml --upload-certs

# Configure kubectl; otherwise kubectl get pods -A cannot be run
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Install the calico network plugin
# Reference: https://docs.projectcalico.org/v3.13/getting-started/kubernetes/self-managed-onprem/onpremises
rm -f calico-3.13.1.yaml
wget https://kuboard.cn/install-script/calico/calico-3.13.1.yaml
kubectl apply -f calico-3.13.1.yaml
```

Execution result:

```bash
# (1) Run the following command and wait 3-10 minutes until all pods are in the Running state
watch kubectl get pod -n kube-system -o wide
# NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
# calico-kube-controllers-5b8b769fcd-ns9r4 1/1 Running 0 6m 10.100.184.65 master-01 <none> <none>
# calico-node-bg2g9 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>
# coredns-54f99b968c-2tqc4 1/1 Running 0 6m 10.100.184.67 master-01 <none> <none>
# coredns-54f99b968c-672zn 1/1 Running 0 6m 10.100.184.66 master-01 <none> <none>
# etcd-master-01 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>
# kube-apiserver-master-01 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>
# kube-controller-manager-master-01 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>
# kube-proxy-trg7v 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>
# kube-scheduler-master-01 1/1 Running 0 6m 10.10.107.191 master-01 <none> <none>

# (2) The master node should now be Ready
kubectl get node -o wide
# NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME
# master-01 Ready master 7m v1.18.4 10.10.107.191 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9

# (3) Images that were downloaded
docker images
# REPOSITORY TAG IMAGE ID CREATED SIZE
# mirrorgcrio/kube-proxy v1.18.4 718fa77019f2 5 days ago 117MB
# mirrorgcrio/kube-apiserver v1.18.4 408913fc18eb 5 days ago 173MB
# mirrorgcrio/kube-scheduler v1.18.4 c663567f869e 5 days ago 95.3MB
# mirrorgcrio/kube-controller-manager v1.18.4 e8f1690127c4 5 days ago 162MB
# calico/node v3.13.1 2e5029b93d4a 3 months ago 260MB
# calico/pod2daemon-flexvol v3.13.1 e8c600448aae 3 months ago 111MB
# calico/cni v3.13.1 6912ec2cfae6 3 months ago 207MB
# calico/kube-controllers v3.13.1 3971f13f2c6c 3 months ago 56.6MB
# mirrorgcrio/pause 3.2 80d28bedfe5d 4 months ago 683kB
# mirrorgcrio/coredns 1.6.7 67da37a9a360 4 months ago 43.8MB
# mirrorgcrio/etcd 3.4.3-0 303ce5db0e90 8 months ago 288MB

# (4) Deploy the pod network to the cluster by installing the calico plugin (cluster networking)
kubectl apply -f calico-3.13.1.yaml
# configmap/calico-config created
# customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
# customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
# clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
# clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
# clusterrole.rbac.authorization.k8s.io/calico-node created
# clusterrolebinding.rbac.authorization.k8s.io/calico-node created
# daemonset.apps/calico-node created
# serviceaccount/calico-node created
# deployment.apps/calico-kube-controllers created
# serviceaccount/calico-kube-controllers created
```

Note: wait until all pods (about 9) are in the Running state before moving on to the next step.
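Waiting until all pods are Running can be scripted instead of watched, which would also make a fixed `sleep 180` unnecessary. This is a minimal sketch, assuming the `kubectl get pod -n kube-system` column layout (NAME, READY, STATUS, ...); with `-A` a NAMESPACE column is added and the field numbers would shift. The helper name `all_pods_running` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get pod -n kube-system` output on stdin and
# return 0 only if every pod is Running with all containers ready (READY "n/n").
# Pods that are not ready are printed by name.
all_pods_running() {
    awk 'NR > 1 {
             split($2, r, "/")
             if ($3 != "Running" || r[1] != r[2]) { print $1; bad = 1 }
         }
         END { exit (bad ? 1 : 0) }'
}
```

A polling loop could then look like `until kubectl get pod -n kube-system | all_pods_running >/dev/null; do sleep 10; done`.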

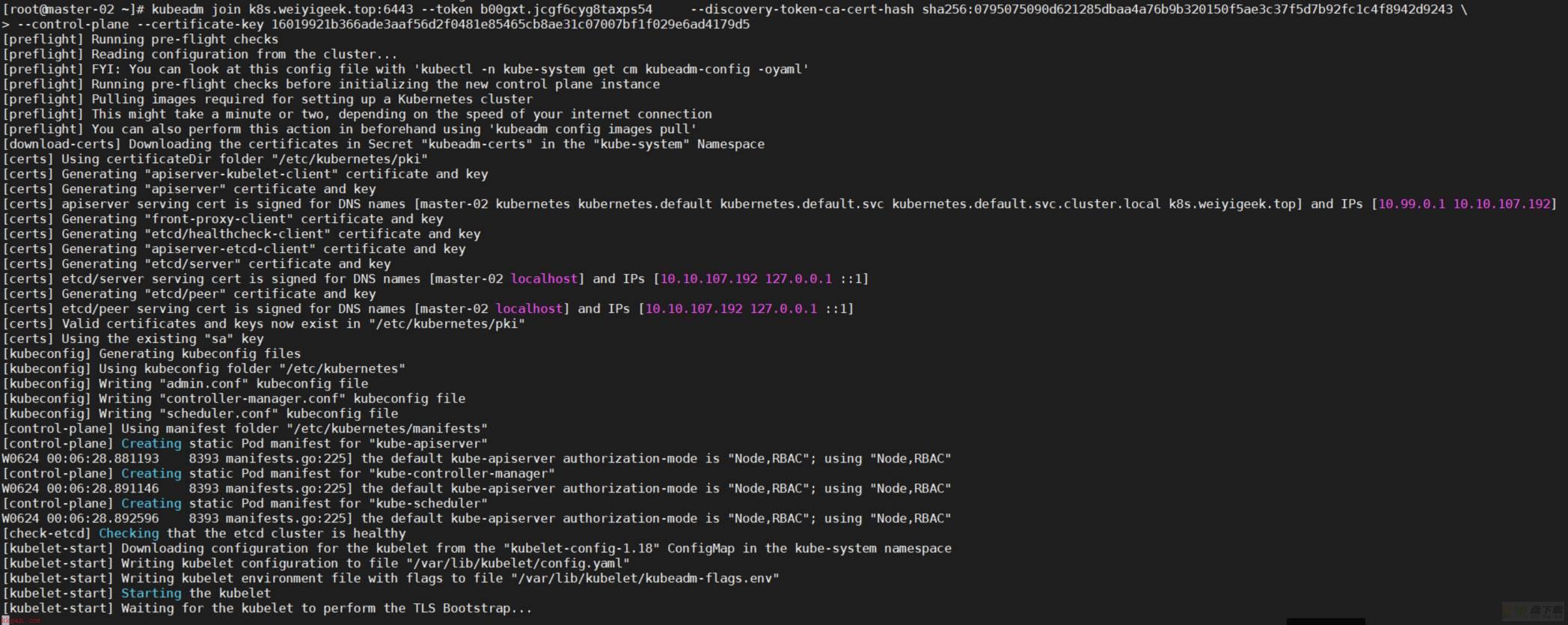

3. On the other two secondary master nodes, run the second join command (the one with `--control-plane`) to add them to the master cluster. Before running steps (1) and (2) below, however, the images they use must be downloaded:

```bash
# (0) Because k8s.gcr.io cannot be reached from mainland China, initializing a secondary master hangs after the control-plane join command until it times out.
# Workaround: pull the images from the default Docker Hub registry and re-tag them, so that k8s.gcr.io never needs to be accessed.
kubeadm config images pull --image-repository mirrorgcrio
# [config/images] Pulled mirrorgcrio/kube-apiserver:v1.18.4
# [config/images] Pulled mirrorgcrio/kube-controller-manager:v1.18.4
# [config/images] Pulled mirrorgcrio/kube-scheduler:v1.18.4
# [config/images] Pulled mirrorgcrio/kube-proxy:v1.18.4
# [config/images] Pulled mirrorgcrio/pause:3.2
# [config/images] Pulled mirrorgcrio/etcd:3.4.3-0
# [config/images] Pulled mirrorgcrio/coredns:1.6.7
kubeadm config images list --image-repository mirrorgcrio > gcr.io.log
# Re-tag each image as k8s.gcr.io/<image>:<version>
sed -e "s#\(/.*$\)#\1 k8s.gcr.io\1#g" gcr.io.log > gcr.io.log1
while read k8sgcrio; do
  docker tag ${k8sgcrio}
done < gcr.io.log1
# Remove the tags that start with mirrorgcrio
while read k8s; do
  docker rmi ${k8s}
done < gcr.io.log
# Final result
docker images
# REPOSITORY TAG IMAGE ID CREATED SIZE
# k8s.gcr.io/kube-proxy v1.18.4 718fa77019f2 6 days ago 117MB
# k8s.gcr.io/kube-scheduler v1.18.4 c663567f869e 6 days ago 95.3MB
# k8s.gcr.io/kube-apiserver v1.18.4 408913fc18eb 6 days ago 173MB
# k8s.gcr.io/kube-controller-manager v1.18.4 e8f1690127c4 6 days ago 162MB
# k8s.gcr.io/pause 3.2 80d28bedfe5d 4 months ago 683kB
# k8s.gcr.io/coredns 1.6.7 67da37a9a360 4 months ago 43.8MB
# k8s.gcr.io/etcd 3.4.3-0 303ce5db0e90 8 months ago 288MB

# (1) Map the APIServer name to the primary master IP
APISERVER_IP=10.10.107.191
APISERVER_NAME=k8s.weiyigeek.top
echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts

# (2) Join the secondary master as a control-plane node (the certificate-key expires after two hours)
kubeadm join k8s.weiyigeek.top:6443 --token opcpye.79zeofy6eo4h9ag6 --discovery-token-ca-cert-hash sha256:0795075090d621285dbaa4a76b9b320150f5ae3c37f5d7b92fc1c4f8942d9243 --control-plane --certificate-key 6dbee003011ac1dae15ae1fad3014ac8b568d154387aa0c43663d5fc47a109c4

# (3) Copy the kubernetes config file to the user's home directory (otherwise kubectl get <resource> fails)
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```
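The `sed` plus `while read` pair above re-tags the images by rewriting each name into a `source target` pair. The same mapping can be written with shell parameter expansion, printing the `docker tag` commands first so they can be reviewed before anything changes (a dry run). This sketch assumes the mirror prefix is a single path segment such as `mirrorgcrio`; the helper name `gcr_retag_cmds` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read mirror image names (one per line) on stdin and print the
# matching `docker tag` command for k8s.gcr.io. Nothing is executed (dry run).
gcr_retag_cmds() {
    while IFS= read -r img; do
        [ -n "$img" ] || continue
        # "${img#*/}" drops the first path segment: mirrorgcrio/pause:3.2 -> pause:3.2
        printf 'docker tag %s k8s.gcr.io/%s\n' "$img" "${img#*/}"
    done
}
```

After reviewing the output, `kubeadm config images list --image-repository mirrorgcrio | gcr_retag_cmds | sh` would apply the tags.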

WeiyiGeek. Secondary master node

4. Run the kubeadm join command on the two worker nodes:

```bash
# (1) Map the APIServer name to the primary master IP
APISERVER_IP=10.10.107.191
APISERVER_NAME=k8s.weiyigeek.top
echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts
# (2) Join the worker node to the cluster managed by the master nodes
kubeadm join k8s.weiyigeek.top:6443 --token opcpye.79zeofy6eo4h9ag6 --discovery-token-ca-cert-hash sha256:0795075090d621285dbaa4a76b9b320150f5ae3c37f5d7b92fc1c4f8942d9243
```

5. Configure the etcd cluster inside the K8s cluster: edit the `--initial-cluster` parameter in etcd.yaml so that all three master nodes are members of the etcd cluster.

```
# Configuration on all master nodes:
[root@master-01 ~]$ grep -n "initial-cluster" /etc/kubernetes/manifests/etcd.yaml
21:    - --initial-cluster=master-01=https://10.10.107.191:2380,master-03=https://10.10.107.193:2380,master-02=https://10.10.107.192:2380
[root@master-02 ~]$ grep -n "initial-cluster" /etc/kubernetes/manifests/etcd.yaml
21:    - --initial-cluster=master-01=https://10.10.107.191:2380,master-02=https://10.10.107.192:2380,master-03=https://10.10.107.193:2380
22:    - --initial-cluster-state=existing
[root@master-03 ~]$ grep -n "initial-cluster" /etc/kubernetes/manifests/etcd.yaml
21:    - --initial-cluster=master-01=https://10.10.107.191:2380,master-03=https://10.10.107.193:2380,master-02=https://10.10.107.192:2380
22:    - --initial-cluster-state=existing

# Then change the kube-apiserver etcd connection to the IPs of all nodes in the cluster
[root@master-01 ~]$ grep -n "etcd-servers" /etc/kubernetes/manifests/kube-apiserver.yaml
25:    - --etcd-servers=https://10.10.107.191:2379,https://10.10.107.192:2379,https://10.10.107.193:2379
[root@master-02 ~]$ grep -n "etcd-servers" /etc/kubernetes/manifests/kube-apiserver.yaml
25:    - --etcd-servers=https://10.10.107.191:2379,https://10.10.107.192:2379,https://10.10.107.193:2379
[root@master-03 ~]$ grep -n "etcd-servers" /etc/kubernetes/manifests/kube-apiserver.yaml
25:    - --etcd-servers=https://10.10.107.191:2379,https://10.10.107.192:2379,https://10.10.107.193:2379
```
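Typing the `--initial-cluster` and `--etcd-servers` lists by hand is error-prone. The values can be generated from `name=ip` pairs, as sketched below. The sketch assumes etcd's default peer port 2380 and client port 2379, as used in the manifests above; the function names `etcd_initial_cluster` and `etcd_servers` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: build the --initial-cluster value from "name=ip" arguments.
etcd_initial_cluster() {
    out=""
    for pair in "$@"; do
        # "${out:+$out,}" adds a comma separator only after the first entry
        out="${out:+$out,}${pair%%=*}=https://${pair#*=}:2380"
    done
    printf '%s\n' "$out"
}

# Hypothetical helper: build the kube-apiserver --etcd-servers value from "name=ip" arguments.
etcd_servers() {
    out=""
    for pair in "$@"; do
        out="${out:+$out,}https://${pair#*=}:2379"
    done
    printf '%s\n' "$out"
}
```

Running `etcd_initial_cluster master-01=10.10.107.191 master-02=10.10.107.192 master-03=10.10.107.193` and comparing it with the `grep -n` output on each master is a quick consistency check; as the manifests above show, the member order itself differs between nodes.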

6. Verify that the master cluster is deployed correctly

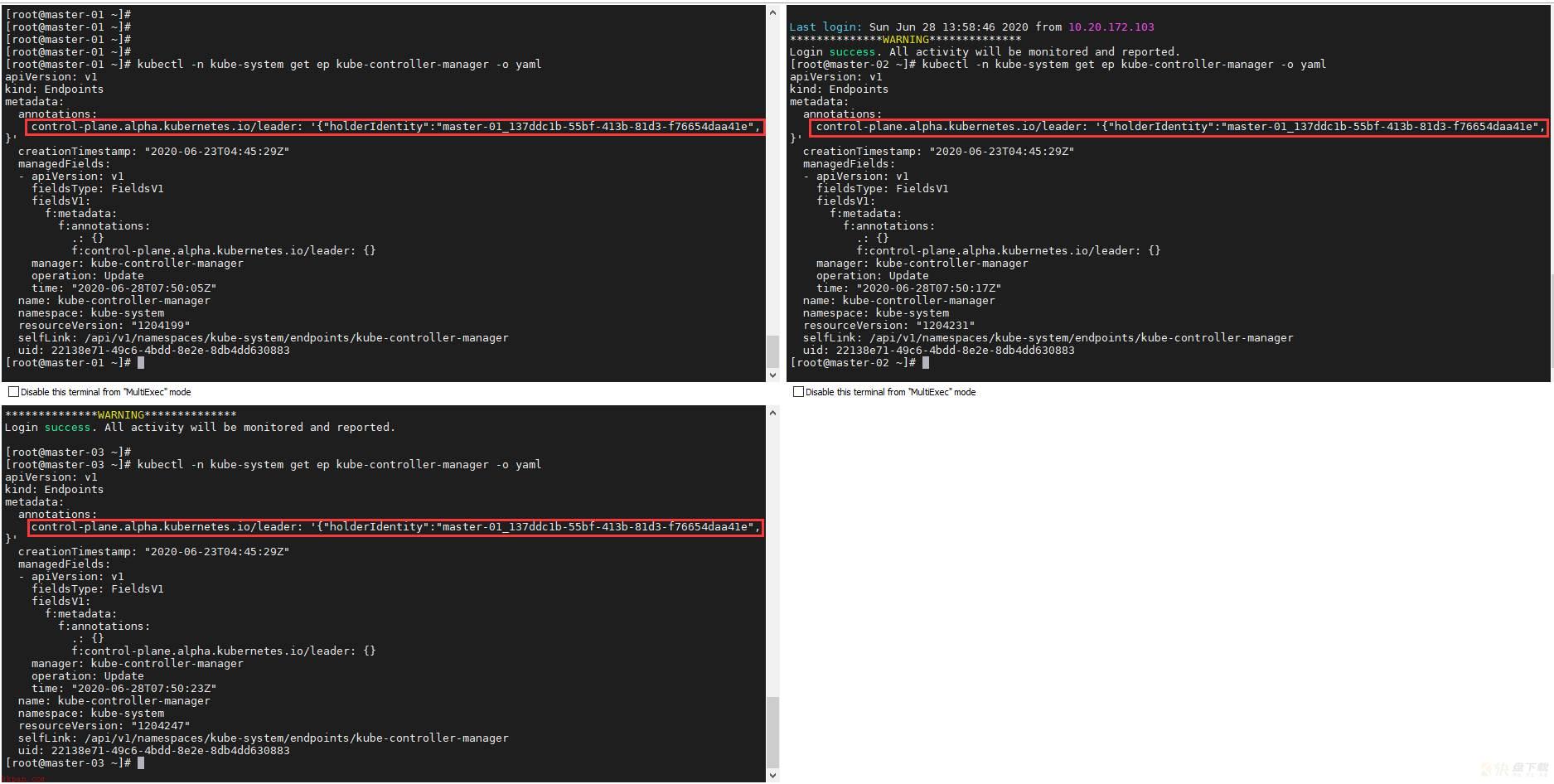

```bash
kubectl get nodes -o wide
# NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME
# master-01 Ready master 5d1h v1.18.4 10.10.107.191 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9
# master-02 Ready master 4d13h v1.18.4 10.10.107.192 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9
# master-03 Ready master 4d4h v1.18.4 10.10.107.193 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9
# worker-01 Ready <none> 5d1h v1.18.4 10.10.107.194 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9
# worker-02 Ready <none> 4d14h v1.18.4 10.10.107.196 <none> CentOS Linux 7 (Core) 5.7.0-1.el7.elrepo.x86_64 docker://19.3.9

kubectl get pods -A -o wide
# NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
# default helloworld 0/1 CrashLoopBackOff 1089 3d21h 10.100.37.193 worker-02 <none> <none>
# kube-system calico-kube-controllers-5b8b769fcd-ns9r4 1/1 Running 0 5d1h 10.100.184.65 master-01 <none> <none>
# kube-system calico-node-8rn2s 1/1 Running 0 4d4h 10.10.107.193 master-03 <none> <none>
# kube-system calico-node-bg2g9 1/1 Running 0 5d1h 10.10.107.191 master-01 <none> <none>
# kube-system calico-node-d2vqd 1/1 Running 0 4d13h 10.10.107.196 worker-02 <none> <none>
# kube-system calico-node-n48dt 1/1 Running 0 4d13h 10.10.107.192 master-02 <none> <none>
# kube-system calico-node-whznq 1/1 Running 1 5d1h 10.10.107.194 worker-01 <none> <none>
# kube-system coredns-54f99b968c-2tqc4 1/1 Running 0 5d1h 10.100.184.67 master-01 <none> <none>
# kube-system coredns-54f99b968c-672zn 1/1 Running 0 5d1h 10.100.184.66 master-01 <none> <none>
# kube-system etcd-master-01 1/1 Running 0 4d2h 10.10.107.191 master-01 <none> <none>
# kube-system etcd-master-02 1/1 Running 0 4d2h 10.10.107.192 master-02 <none> <none>
# kube-system etcd-master-03 1/1 Running 0 4d4h 10.10.107.193 master-03 <none> <none>
# kube-system kube-apiserver-master-01 1/1 Running 0 4d2h 10.10.107.191 master-01 <none> <none>
# kube-system kube-apiserver-master-02 1/1 Running 0 4d2h 10.10.107.192 master-02 <none> <none>
# kube-system kube-apiserver-master-03 1/1 Running 0 4d2h 10.10.107.193 master-03 <none> <none>
# kube-system kube-controller-manager-master-01 1/1 Running 3 5d1h 10.10.107.191 master-01 <none> <none>
# kube-system kube-controller-manager-master-02 1/1 Running 2 4d13h 10.10.107.192 master-02 <none> <none>
# kube-system kube-controller-manager-master-03 1/1 Running 1 4d4h 10.10.107.193 master-03 <none> <none>
# kube-system kube-proxy-5jjql 1/1 Running 0 4d13h 10.10.107.196 worker-02 <none> <none>
# kube-system kube-proxy-7ln9t 1/1 Running 1 5d1h 10.10.107.194 worker-01 <none> <none>
# kube-system kube-proxy-8x257 1/1 Running 0 4d4h 10.10.107.193 master-03 <none> <none>
# kube-system kube-proxy-gbm52 1/1 Running 0 4d13h 10.10.107.192 master-02 <none> <none>
# kube-system kube-proxy-trg7v 1/1 Running 0 5d1h 10.10.107.191 master-01 <none> <none>
# kube-system kube-scheduler-master-01 1/1 Running 1 5d1h 10.10.107.191 master-01 <none> <none>
# kube-system kube-scheduler-master-02 1/1 Running 3 4d13h 10.10.107.192 master-02 <none> <none>
# kube-system kube-scheduler-master-03 1/1 Running 2 4d4h 10.10.107.193 master-03 <none> <none>

# Check component health
[root@master-01 ~]$ kubectl get cs
NAME STATUS MESSAGE ERROR
scheduler Healthy ok
controller-manager Healthy ok
etcd-1 Healthy {"health":"true"}
etcd-2 Healthy {"health":"true"}
etcd-0 Healthy {"health":"true"}
[root@master-02 ~]$ kubectl get cs
NAME STATUS MESSAGE ERROR
scheduler Healthy ok
controller-manager Healthy ok
etcd-2 Healthy {"health":"true"}
etcd-0 Healthy {"health":"true"}
etcd-1 Healthy {"health":"true"}
[root@master-03 ~]$ kubectl get cs
NAME STATUS MESSAGE ERROR
controller-manager Healthy ok
scheduler Healthy ok
etcd-2 Healthy {"health":"true"}
etcd-1 Healthy {"health":"true"}
etcd-0 Healthy {"health":"true"}

# View the cluster configuration
kubectl get cm kubeadm-config -n kube-system -o yaml
# View leader-election information
kubectl get ep kube-controller-manager -n kube-system -o yaml
```

WeiyiGeek. Leader-election information
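The leader-election command above prints the whole Endpoints object. The sketch below extracts just the current leader. It assumes three things: the lock is recorded in the `control-plane.alpha.kubernetes.io/leader` annotation, the annotation value is a JSON string, and its `holderIdentity` has the form `<hostname>_<id>`. Check these against your own output. `leader_holder` and `leader_node` are hypothetical helper names:

```shell
#!/usr/bin/env bash
# Hypothetical helpers: they read the YAML printed by
#   kubectl get ep kube-controller-manager -n kube-system -o yaml
# on stdin. They assume the leader annotation holds a JSON string with a
# "holderIdentity" field.

# leader_holder: print the holderIdentity value (first match only)
leader_holder() {
  sed -n 's/.*"holderIdentity":"\([^"]*\)".*/\1/p' | head -n 1
}

# leader_node: print only the hostname part (everything before the first "_")
leader_node() {
  local holder
  holder="$(leader_holder)"
  printf '%s\n' "${holder%%_*}"
}
```

Usage: `kubectl get ep kube-controller-manager -n kube-system -o yaml | leader_node` prints the master that currently holds the controller-manager lock.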

6. Removing a worker node

```shell
# (1) On the worker node to be removed, run:
kubeadm reset
# (2) Then delete the node object on the first master node (master-01);
#     worker node names can be listed with `kubectl get nodes`:
kubectl delete node worker-02
```

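7. At this point a basic K8s cluster is up and running. One last note concerns expired join tokens. They can be handled with the commands below, which must be run on the primary master node.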

```shell
# (1) Check whether the token has expired (the default TTL is 24h)
kubeadm token list
# TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS
# opcpye.79zeofy6eo4h9ag6 13h 2020-06-24T12:45:29+08:00 authentication,signing <none> system:bootstrappers:kubeadm:default-node-token

## Method (1) ##
# (2) Join further nodes with kubeadm (recommended). `kubeadm init phase` runs a single
#     phase of the init workflow; this one re-uploads the control-plane certificates
#     and prints the certificate key.
#     Note: the uploaded certificates and this key are deleted after two hours.
kubeadm init phase upload-certs --upload-certs
# [upload-certs] Using certificate key:
# 70eb87e62f052d2d5de759969d5b42f372d0ad798f98df38f7fe73efdf63a13c
kubeadm token create --print-join-command
# kubeadm join apiserver.demo:6443 --token bl80xo.hfewon9l5jlpmjft --discovery-token-ca-cert-hash sha256:b4d2bed371fe4603b83e7504051dcfcdebcbdcacd8be27884223c4ccc13059a4
# Combining both outputs gives the join command for the second and third master nodes:
kubeadm join apiserver.demo:6443 --token bl80xo.hfewon9l5jlpmjft \
  --discovery-token-ca-cert-hash sha256:b4d2bed371fe4603b83e7504051dcfcdebcbdcacd8be27884223c4ccc13059a4 \
  --control-plane --certificate-key 70eb87e62f052d2d5de759969d5b42f372d0ad798f98df38f7fe73efdf63a13c
# (3) Worker nodes simply run the join command printed above
kubeadm join apiserver.demo:6443 --token bl80xo.hfewon9l5jlpmjft \
  --discovery-token-ca-cert-hash sha256:b4d2bed371fe4603b83e7504051dcfcdebcbdcacd8be27884223c4ccc13059a4

## Method (2) ##
# (1) If the token has expired, create a new one
kubeadm token create
# 2q41vx.w73xe9nrlqdujawu   <- the new token
# (2) Compute the SHA-256 hash of the CA certificate's public key
openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
# 43c8b7186efa9c68002aca3d4eed56fbc9e200c8550071a3dd1db99a10445713
# (the hash stays the same as long as the cluster CA certificate does not change)
# (3) Join the node to the cluster
kubeadm join 192.168.80.137:6443 --token <new-token> --discovery-token-ca-cert-hash sha256:<ca-public-key-hash>
# e.g. kubeadm join apiserver.demo:6443 --token 2q41vx.w73xe9nrlqdujawu --discovery-token-ca-cert-hash sha256:43c8b7186efa9c68002aca3d4eed56fbc9e200c8550071a3dd1db99a10445713
```
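Method (2) assembles the join command by hand. The sketch below performs that assembly with a sanity check on the token format: a bootstrap token is six lowercase alphanumeric characters, a dot, then sixteen more. `build_join_cmd` and `is_valid_token` are hypothetical helpers and are not part of kubeadm:

```shell
#!/usr/bin/env bash
# is_valid_token TOKEN: bootstrap tokens must match [a-z0-9]{6}.[a-z0-9]{16}
is_valid_token() {
  [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

# build_join_cmd ENDPOINT TOKEN CA_HASH [CERT_KEY]
# Prints a worker join command. If CERT_KEY is given, prints the control-plane
# variant instead. A leading "sha256:" on CA_HASH is accepted and normalized.
# Returns 1 (and prints nothing on stdout) if the token is malformed.
build_join_cmd() {
  local endpoint="$1" token="$2" hash="$3" cert_key="${4:-}"
  if ! is_valid_token "$token"; then
    echo "invalid token: $token" >&2
    return 1
  fi
  local cmd="kubeadm join ${endpoint} --token ${token} --discovery-token-ca-cert-hash sha256:${hash#sha256:}"
  if [[ -n "$cert_key" ]]; then
    cmd+=" --control-plane --certificate-key ${cert_key}"
  fi
  printf '%s\n' "$cmd"
}
```

For example, on the primary master: `build_join_cmd apiserver.demo:6443 "$(kubeadm token create)" "$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //')"`. Passing the key printed by `kubeadm init phase upload-certs --upload-certs` as the fourth argument gives the control-plane variant. `kubeadm token create --print-join-command` already does the same job; the helper only makes the individual pieces visible.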

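1) Commands that query nodes and pods can only be run on the master nodes. Only the masters have the admin kubeconfig (`$HOME/.kube/config`) set up, unless you copy it to another machine.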

2) If initialization of the primary master node fails and it has to be reconfigured, reset it with the following commands:

```shell
systemctl stop kubelet
docker stop $(docker ps -aq)
docker rm -f $(docker ps -aq)
systemctl stop docker
kubeadm reset
sudo rm -rf $HOME/.kube /etc/kubernetes
sudo rm -rf /var/lib/cni/ /etc/cni/ /var/lib/kubelet/*
iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X
systemctl start docker
systemctl start kubelet
```

3) If joining an additional master node hangs at the pre-flight stage, check on the second and third nodes that the API server is reachable: `curl -ik https://<APISERVER>:6443/version`

```shell
# Healthy response
$ curl -ik https://k8s.weiyigeek.top:6443/version
HTTP/1.1 200 OK
Cache-Control: no-cache, private
Content-Type: application/json
Date: Wed, 24 Jun 2020 02:16:23 GMT
Content-Length: 263

{
  "major": "1",
  "minor": "18",
  "gitVersion": "v1.18.4",
  "gitCommit": "c96aede7b5205121079932896c4ad89bb93260af",
  "gitTreeState": "clean",
  "buildDate": "2020-06-17T11:33:59Z",
  "goVersion": "go1.13.9",
  "compiler": "gc",
  "platform": "linux/amd64"
}
```
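You may want to script this check, for example across several nodes. In that case the version can be pulled out of the JSON body. The sketch below uses only `tr` and `sed` and assumes the response keeps the `"gitVersion": "v..."` layout shown above; a real JSON parser such as `jq` would be more robust. The function names are hypothetical:

```shell
#!/usr/bin/env bash
# api_git_version: read the JSON body of https://<APISERVER>:6443/version on stdin
# and print its gitVersion field (e.g. v1.18.4). Newlines are removed first so
# that both compact and pretty-printed JSON work.
api_git_version() {
  tr -d '\n' | sed -n 's/.*"gitVersion": *"\([^"]*\)".*/\1/p'
}

# expect_version VERSION: succeed only if the JSON on stdin reports VERSION
expect_version() {
  [[ "$(api_git_version)" == "$1" ]]
}
```

Usage: `curl -sk https://k8s.weiyigeek.top:6443/version | expect_version v1.18.4 && echo "apiserver reachable"`. Here `-s` replaces `-i`, so only the response body is piped into the function.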

0x03 Manual K8s Cluster Installation (Offline)

Description: offline installation means installing a K8s cluster on machines that have no Internet access. There are two approaches:

1. The offline installation tool sealos

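(1) Written purely in Go: a single binary with no dependencies

(2) Local kernel-level load balancing, with no dependency on haproxy, keepalived, etc.

(3) No dependency on Ansible

(4) 99-year certificates

(5) Supports custom installation configuration

(6) The tool and the resource packages are separate, which enables offline installation; installing a different version only requires swapping in a different resource package

(7) Supports installing apps (add-ons) such as ingress, kuboard and prometheus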

2. Build your own package repository from a system template, plus a Harbor Docker image registry.

Basic requirements:

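1. CentOS 7.6 or later is recommended, with kernel 4.14 or later; each node should have at least 2 CPU cores and 4 GB of RAM.
2. All machines should share the same root password (if they do not, SSH keys can be used instead).

1. Semi-automatic offline installation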

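Description: for a semi-automatic offline Kubernetes installation, we download the images in advance and set up a local internal yum repository server. The following preparation is needed: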

(1) Base OS installation image: CentOS Linux release 7.8.2003 (Core), kernel 5.7.0-1.el7.elrepo.x86_64

(2) An internal yum repository holding the Kubernetes packages: kubelet-1.18.4, kubeadm-1.18.4 and kubectl-1.18.4

(3) A Docker environment used to download and package the Kubernetes component images (using a Harbor registry for this later on is recommended):

```
k8s.gcr.io/kube-apiserver:v1.18.4
k8s.gcr.io/kube-controller-manager:v1.18.4
k8s.gcr.io/kube-scheduler:v1.18.4
k8s.gcr.io/kube-proxy:v1.18.4
k8s.gcr.io/pause:3.2
k8s.gcr.io/etcd:3.4.3-0
k8s.gcr.io/coredns:1.6.7
```

(4) A simple web server: Nginx or httpd

(5) Several machines with suitable specs, each with a distinct hostname.

How to download the k8s.gcr.io images:

1. When the machine cannot reach k8s.gcr.io

```shell
## Image download method (1)
# Option 1: kubeadm config images list > gcr.io.log && sed -i 's#k8s.gcr.io#mirrorgcrio#g' gcr.io.log
# Option 2: kubeadm config images list --image-repository mirrorgcrio > gcr.io.log
# Note: the for loops below split their input on whitespace (spaces and newlines)
# for k8s in $(cat gcr.io.log); do docker pull ${k8s}; done
# sed -e "s#\(/.*$\)#\1 k8s.gcr.io\1#g" gcr.io.log > gcr.io.log1
# while read k8sgcrio; do docker tag ${k8sgcrio}; done < gcr.io.log1
# for k8s in $(cat gcr.io.log); do docker rmi ${k8s}; done

## Image download, retag and packaging method (2) - recommended
K8SVERSION=1.18.5
# Pull every required image from the mirrorgcrio mirror
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io/docker pull mirrorgcrio/g' | sudo sh
# Retag them as k8s.gcr.io/<name>:<tag>
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io\(.*\)/docker tag mirrorgcrio\1 k8s.gcr.io\1/g' | sudo sh
# Remove the mirror tags
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io/docker image rm mirrorgcrio/g' | sudo sh
# Save exactly the images kubeadm needs (name:tag), then compress the archive
docker save -o v${K8SVERSION}.tar $(kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null)
gzip v${K8SVERSION}.tar   # produces v${K8SVERSION}.tar.gz
```
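The three `sed` one-liners in method (2) all rewrite the same image list. The sketch below writes that rewrite out as a single function, so you can review the generated commands before piping them to `sh`. `mirror_cmds` is a hypothetical helper. `mirrorgcrio` is just the mirror prefix used in this article, and whether that mirror still carries a given tag is an assumption you should verify:

```shell
#!/usr/bin/env bash
# mirror_cmds ACTION MIRROR
# Read k8s.gcr.io image references on stdin, one per line (e.g. the output of
# `kubeadm config images list`). For each one, print the docker command that
# ACTION needs:
#   pull - pull the image from MIRROR
#   tag  - retag MIRROR/<name>:<tag> as k8s.gcr.io/<name>:<tag>
#   rmi  - remove the MIRROR tag
mirror_cmds() {
  local action="$1" mirror="$2" img name
  while IFS= read -r img; do
    [[ "$img" == k8s.gcr.io/* ]] || continue   # skip warnings and blank lines
    name="${img#k8s.gcr.io/}"                  # e.g. kube-apiserver:v1.18.5
    case "$action" in
      pull) echo "docker pull ${mirror}/${name}" ;;
      tag)  echo "docker tag ${mirror}/${name} k8s.gcr.io/${name}" ;;
      rmi)  echo "docker image rm ${mirror}/${name}" ;;
      *)    echo "unknown action: ${action}" >&2; return 1 ;;
    esac
  done
}
```

Usage: `kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | mirror_cmds pull mirrorgcrio` only prints the commands. Append `| sudo sh` once they look right, then repeat with `tag` and `rmi`.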

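2. If the machine can reach k8s.gcr.io, pull the images for the required version, `save` them into a tar archive, and then either copy the archive back to the local network or push the images to Harbor.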

```shell
#!/bin/bash
# for: use kubeadm to pull the kubernetes images
# date: 2019-08-15
set -xue
apt-get update && apt-get install -y apt-transport-https curl
curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | apt-key add -
cat <<EOF >/etc/apt/sources.list.d/kubernetes.list
deb https://apt.kubernetes.io/ kubernetes-xenial main
EOF
apt-get update
for version in 1.17.4
do
  # -y keeps the script non-interactive
  apt-get install -y kubeadm=${version}-00
  mkdir -p ${version}
  kubeadm config images pull --kubernetes-version=${version}
  # Save exactly the images (name:tag) required by this version
  docker save -o v${version}.tar $(kubeadm config images list --kubernetes-version=${version})
  gzip v${version}.tar   # produces v${version}.tar.gz
done
```

Basic workflow: Step 1. Set up the local internal yum repository (download the packages the environment depends on)

```shell
## Global variables
export K8SVERSION="1.18.5"
export REGISTRY_MIRROR="https://xlx9erfu.mirror.aliyuncs.com"
## Basic system settings
hostnamectl set-hostname k8s-yum-server && echo "127.0.0.1 k8s-yum-server" >> /etc/hosts
setenforce 0 && getenforce && hostnamectl status
## Keep downloaded rpm packages in the yum cache (they become the repository contents)
sed -i "s#keepcache=0#keepcache=1#g" /etc/yum.conf && echo -e "Cache directory:" && grep "cachedir" /etc/yum.conf
## Package installation
# Install basic dependencies
yum install -y yum-utils lvm2 wget nfs-utils
# Add the docker-ce repository
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
cat <<'EOF' > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
yum list docker-ce --showduplicates | sort -r
read -p 'Enter the docker-ce version to install (e.g. 19.03.9): ' VERSION
yum install -y docker-ce-${VERSION} docker-ce-cli-${VERSION} containerd.io
yum list kubeadm --showduplicates | sort -r
# createrepo and httpd are required to build the internal repository
yum install -y kubelet-${K8SVERSION} kubeadm-${K8SVERSION} kubectl-${K8SVERSION} httpd createrepo
# Example of installing specific docker-ce and kubelet/kubeadm versions:
# yum install docker-ce-19.03.3-3.el7 kubelet-1.17.4-0 kubeadm-1.17.4-0 kubectl-1.17.4-0 --disableexcludes=kubernetes
## Configure the registry mirror and restart docker (only possible once docker-ce is installed)
if [[ ! -d "/etc/docker/" ]]; then mkdir /etc/docker/; fi
cat > /etc/docker/daemon.json <<EOF
{"registry-mirrors": ["REPLACE"]}
EOF
sed -i "s#REPLACE#${REGISTRY_MIRROR}#g" /etc/docker/daemon.json
systemctl daemon-reload
systemctl enable docker && systemctl restart docker
```

Step 2. Download the k8s.gcr.io images locally and package them

```shell
## Pull the k8s.gcr.io images through the mirrorgcrio mirror, retag them and drop the mirror tags
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io/docker pull mirrorgcrio/g' | sudo sh
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io\(.*\)/docker tag mirrorgcrio\1 k8s.gcr.io\1/g' | sudo sh
kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null | sed 's/k8s.gcr.io/docker image rm mirrorgcrio/g' | sudo sh
docker save -o v${K8SVERSION}.tar $(kubeadm config images list --kubernetes-version=${K8SVERSION} 2>/dev/null)
# Compress to reduce the size of the packaged images
gzip v${K8SVERSION}.tar   # produces v${K8SVERSION}.tar.gz
```

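Step 3. Copy the rpm packages from the yum cache and the packaged k8s images into the httpd document root (/var/www/html/). Then generate the internal yum repository metadata and index files: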

```shell
# Disable the default httpd welcome page
mv /etc/httpd/conf.d/welcome.conf{,.bak}
mkdir /var/www/html/yum/
find /var/cache/yum -name "*.rpm" -exec cp -a {} /var/www/html/yum/ \;
# Permissions matter: without read access, later downloads fail with "permission denied"
cp v${K8SVERSION}.tar.gz /var/www/html/yum/ && chmod 644 /var/www/html/yum/v${K8SVERSION}.tar.gz
# Generate the internal yum repository database and index files
createrepo -pdo /var/www/html/yum/ /var/www/html/yum/
createrepo --update /var/www/html/yum/
```
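The permission warning above applies to every file in the repository, not only the image archive. httpd serves files as its own unprivileged user (`apache` on CentOS), and files copied from root-owned locations may lack the "other" read bit. A minimal sketch that lists such files; `unreadable_files` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# unreadable_files DIR
# List regular files under DIR that lack the "other" read bit (mode 004).
# For root-owned files, httpd's unprivileged user needs exactly that bit
# to serve them.
unreadable_files() {
  find "$1" -type f ! -perm -004 | sort
}
```

Usage: `unreadable_files /var/www/html/yum/`. Fix anything it lists with `chmod o+r`, then rerun it until the output is empty.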

Step 4. Start the httpd service for the internal yum repository and open the firewall

```shell
firewall-cmd --add-port=80/tcp --permanent
firewall-cmd --reload
systemctl start httpd
```

Step 5. Clone a machine from the template and verify that the internal repository is configured correctly and can install packages

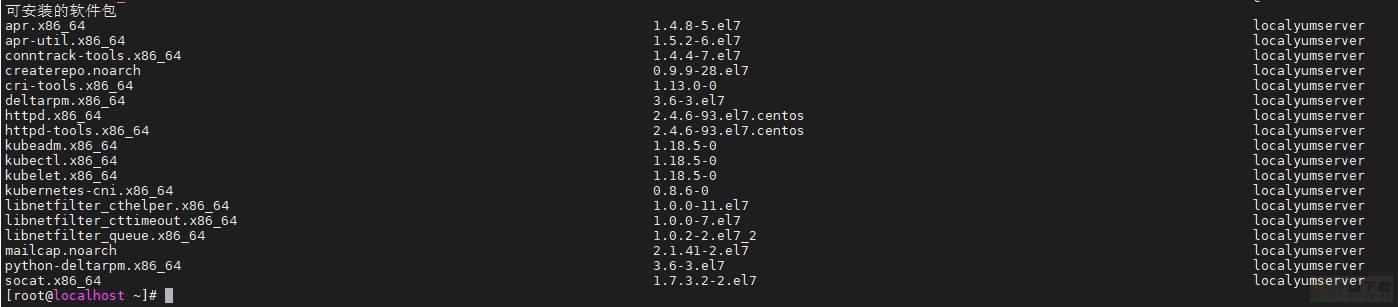

```shell
echo "10.10.107.201 yum.weiyigeek.top" >> /etc/hosts
cat > /etc/yum.repos.d/localyumserver.repo <<END
[localyumserver]
name=localyumserver
baseurl=http://yum.weiyigeek.top/yum/
enabled=1
gpgcheck=0
END
yum --enablerepo=localyumserver --disablerepo=base,extras,updates,epel,elrepo,docker-ce-stable list
```

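If the output looks like the following, the repository was created successfully; otherwise adjust the configuration according to the error messages.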

WeiyiGeek.localyumserver

Step 6. Configure the base K8s environment on the cloned machine

```shell
export HOSTNAME=worker-03
# Temporarily disable swap and SELinux
swapoff -a && setenforce 0
# Permanently disable swap and SELinux
yes | cp /etc/fstab /etc/fstab_bak
cat /etc/fstab_bak | grep -v swap > /etc/fstab
sed -i "s/^SELINUX=.*$/SELINUX=disabled/" /etc/selinux/config
# Set the hostname (use the name assigned to this host's IP in the address plan above; it differs per host)
hostnamectl set-hostname $HOSTNAME
hostnamectl status
# Hosts file entries
echo "127.0.0.1 $HOSTNAME" >> /etc/hosts
cat >> /etc/hosts <<EOF
10.10.107.191 master-01
10.10.107.192 master-02
10.10.107.193 master-03
10.10.107.194 worker-01
10.10.107.196 worker-02
10.20.172.200 worker-03
EOF
# Configure kernel parameters in /etc/sysctl.conf
egrep -q "^(#)?net.ipv4.ip_forward.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv4.ip_forward.*|net.ipv4.ip_forward = 1|g" /etc/sysctl.conf || echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-ip6tables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-ip6tables.*|net.bridge.bridge-nf-call-ip6tables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.bridge.bridge-nf-call-iptables.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.bridge.bridge-nf-call-iptables.*|net.bridge.bridge-nf-call-iptables = 1|g" /etc/sysctl.conf || echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.disable_ipv6.*|net.ipv6.conf.all.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.default.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.default.disable_ipv6.*|net.ipv6.conf.default.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.default.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.lo.disable_ipv6.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.lo.disable_ipv6.*|net.ipv6.conf.lo.disable_ipv6 = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.lo.disable_ipv6 = 1" >> /etc/sysctl.conf
egrep -q "^(#)?net.ipv6.conf.all.forwarding.*" /etc/sysctl.conf && sed -ri "s|^(#)?net.ipv6.conf.all.forwarding.*|net.ipv6.conf.all.forwarding = 1|g" /etc/sysctl.conf || echo "net.ipv6.conf.all.forwarding = 1" >> /etc/sysctl.conf
# The net.bridge.* keys only exist once the br_netfilter module is loaded
modprobe br_netfilter
# Apply the kernel parameters immediately
sysctl -p
# Registry mirror
export REGISTRY_MIRROR="https://xlx9erfu.mirror.aliyuncs.com"
if [[ ! -d "/etc/docker/" ]]; then mkdir /etc/docker/; fi
cat > /etc/docker/daemon.json <<EOF
{"registry-mirrors": ["REPLACE"]}
EOF
sed -i "s#REPLACE#${REGISTRY_MIRROR}#g" /etc/docker/daemon.json
```

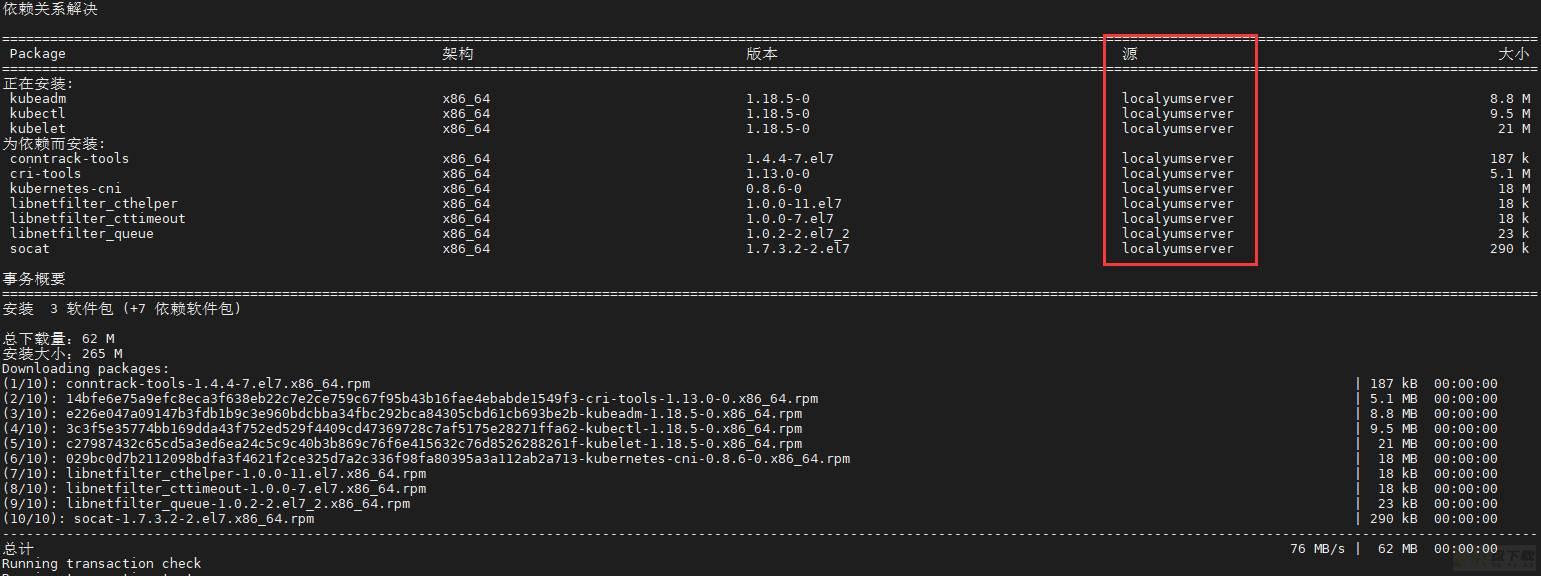
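Each `egrep ... && sed ... || echo ...` line in Step 6 applies the same replace-or-append logic to one key. The sketch below writes that logic once as a function, with two small changes. First, the dots in the key are escaped. Second, the key must be followed by optional whitespace and `=`, so `net.ipv4.ip_forward` no longer also matches keys such as `net.ipv4.ip_forward_use_pmtu`. `set_sysctl` is a hypothetical name:

```shell
#!/usr/bin/env bash
# set_sysctl KEY VALUE [FILE]
# Ensure FILE (default /etc/sysctl.conf) contains "KEY = VALUE". An existing
# line for KEY, commented out or not, is rewritten in place; otherwise a new
# line is appended. Running it twice leaves the file unchanged.
set_sysctl() {
  local key="$1" value="$2" file="${3:-/etc/sysctl.conf}"
  local ekey re
  ekey="$(printf '%s' "$key" | sed 's/\./\\./g')"   # escape dots for the regex
  re="^#?[[:space:]]*${ekey}[[:space:]]*="
  if grep -Eq "$re" "$file" 2>/dev/null; then
    sed -ri "s|${re}.*|${key} = ${value}|" "$file"
  else
    echo "${key} = ${value}" >> "$file"
  fi
}
```

Usage on the node, for example: `for kv in net.ipv4.ip_forward=1 net.bridge.bridge-nf-call-iptables=1 net.bridge.bridge-nf-call-ip6tables=1; do set_sysctl "${kv%%=*}" "${kv#*=}"; done; modprobe br_netfilter; sysctl -p`.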

Step 7. Install the Kubernetes environment from the internal yum repository

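```shell
# docker-ce is listed too in case the template does not already include it (its rpms were cached in Step 1)
yum install -y --enablerepo=localyumserver --disablerepo=base,extras,updates,epel,elrepo,docker-ce-stable docker-ce docker-ce-cli containerd.io kubelet kubeadm kubectl
# Docker startup settings: use the systemd cgroup driver
sed -i "s#^ExecStart=/usr/bin/dockerd.*#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --exec-opt native.cgroupdriver=systemd#g" /usr/lib/systemd/system/docker.service
# Restart docker and start kubelet (both enabled at boot)
systemctl daemon-reload && systemctl enable docker kubelet
systemctl restart docker kubelet
```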

WeiyiGeek. Installing the kube* packages

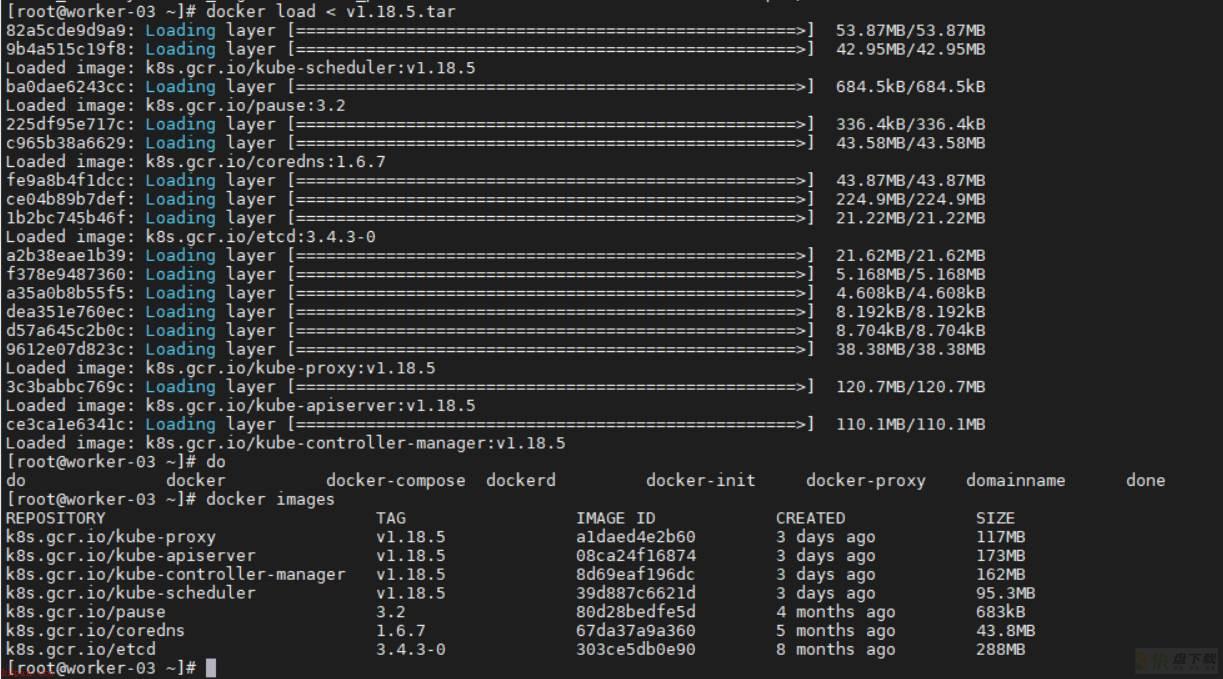

Step 8. Download the image archive from the yum repository to the machine being deployed, then import the images into the host's Docker image store with `docker load`.

```shell
wget -c http://10.10.107.201/yum/v1.18.5.tar.gz
gzip -dv v1.18.5.tar.gz && docker load < v1.18.5.tar
```

WeiyiGeek. Image import result
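Before running `kubeadm join`, it can help to confirm that every image kubeadm expects was actually loaded. The sketch below compares two plain-text image lists. `missing_images` is a hypothetical helper, and the file names in the usage line are placeholders:

```shell
#!/usr/bin/env bash
# missing_images REQUIRED_FILE PRESENT_FILE
# Print every line of REQUIRED_FILE (one image reference per line) that does
# not appear verbatim as a line in PRESENT_FILE.
# Returns 0 if nothing is missing and 1 otherwise.
missing_images() {
  local missing
  missing="$(grep -vxF -f "$2" "$1")"
  [[ -z "$missing" ]] && return 0
  printf '%s\n' "$missing"
  return 1
}
```

Usage: run `kubeadm config images list --kubernetes-version=v1.18.5 2>/dev/null > required.txt` and `docker images --format '{{.Repository}}:{{.Tag}}' > present.txt`, then `missing_images required.txt present.txt`. A worker node only runs kube-proxy and pause from this list, so a missing control-plane image on a worker is not necessarily a problem.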

Step 9. Join the worker node to the cluster

```shell
# (1) Run on the primary master node
[root@master-01 ~]$ kubeadm token create --print-join-command 2>/dev/null
kubeadm join k8s.weiyigeek.top:6443 --token fvu5ei.akiiuhywibwxvdwh --discovery-token-ca-cert-hash sha256:0795075090d621285dbaa4a76b9b320150f5ae3c37f5d7b92fc1c4f8942d9243

# (2) On the worker node: resolve the API server name, then join the k8s cluster
APISERVER_IP=10.10.107.191
APISERVER_NAME=k8s.weiyigeek.top
echo "${APISERVER_IP} ${APISERVER_NAME}" >> /etc/hosts
[root@worker-03 ~]$ kubeadm join k8s.weiyigeek.top:6443 --token fvu5ei.akiiuhywibwxvdwh --discovery-token-ca-cert-hash sha256:0795075090d621285dbaa4a76b9b320150f5ae3c37f5d7b92fc1c4f8942d9243

# (3) On the master node, verify that the worker node has joined
$ kubectl get nodes
NAME STATUS ROLES AGE VERSION
master-01 Ready master 6d8h v1.18.4
master-02 Ready master 5d20h v1.18.4
master-03 Ready master 5d11h v1.18.4
worker-01 Ready <none> 6d8h v1.18.4
worker-02 Ready <none> 5d21h v1.18.4
worker-03 Ready <none> 11m v1.18.5
# worker-03 reports v1.18.5 because its kubelet is v1.18.5; real production environments normally use a stable release
$ kubectl get pods -A -o wide | grep "worker-03"
kube-system calico-node-f2vwk 1/1 Running 0 2m14s 10.20.172.200 worker-03 <none> <none>
kube-system kube-proxy-mwml4 1/1 Running 0 2m5s 10.20.172.200 worker-03 <none> <none>
```

Notes:

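1. When pulling images with `kubeadm config images pull`, the kubeadm version should preferably match `--kubernetes-version=${version}`. Otherwise the images for some versions cannot be pulled, because each Kubernetes version needs its own matching images.
2. Within the same release line (e.g. 1.18.x), usually only the images of the core Kubernetes components change between versions. Dependent components such as pause:3.2, etcd:3.4.3-0 and coredns:1.6.7 normally stay the same.
3. Once the Docker images have been imported successfully, use `kubeadm init` to initialize a master node, or `kubeadm join` to add a worker node.

2. Offline package installation (sealos)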

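Description: production environments need a highly available control plane. For ease of deployment we use sealos, a kubeadm-based deployment tool. Its package contains all the required binaries, image files, systemd configuration, YAML configuration and some simple startup scripts. In production, the preparation steps from the test environment are unnecessary, because sealos initializes the nodes automatically. You only need to download the sealos binary and the offline package onto one master node and deploy from there.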

Resources used:

1. The Kubernetes offline installation package
2. The sealos binary

Basic notes:

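1. Across the offline packages for 1.17.0~1.17.5 and for 1.18.0~1.18.5, only the Kubernetes images differ; all other add-on versions are identical. You can therefore use the 1.17.0/1.18.0 package as a base and build the version you need from it. Example: deploying a cluster from the 1.18.0 base version: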

Step 1. Download the latest sealos binary

```shell
wget -c https://sealyun.oss-cn-beijing.aliyuncs.com/latest/sealos -O /usr/bin/sealos && chmod +x /usr/bin/sealos
```

Step 2. sealos parameters and usage

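Parameter | Meaning | Example | Required |
|---|---|---|---|
passwd | Server password | 123456 | Either this or pk |
master | IP address of a k8s master node | 192.168.0.2 | Required |
node | IP address of a k8s worker node | 192.168.0.3 | Optional |
pkg-url | Location of the offline resource package: a local path or a remote URL | /root/kube1.16.0.tar.gz | Required |
version | Version of the resource package | v1.16.0 | Required |
kubeadm-config | Custom kubeadm configuration file | kubeadm.yaml.temp | Optional |
pk | Path to the SSH private key, for key-based login | /root/.ssh/id_rsa | Either this or passwd |
user | SSH user name | root | Optional |
interface | NIC name pattern used for CNI interface discovery | eth.* | Optional |
network | CNI type, e.g. calico or flannel | calico | Optional |
podcidr | Pod network CIDR | 100.64.0.0/10 | Optional |
repo | Image registry; usually not needed with the offline package unless you have imported the images into your own private registry | k8s.gcr.io | Optional |
svccidr | ClusterIP (Service) network CIDR | 10.96.0.0/22 | Optional |
without-cni | Skip installing the CNI plugin so you can install a different CNI yourself | - | Optional |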

Copy the prepared offline package to the /opt directory of the master node with scp.

```shell
sealos init --master 10.10.107.109 --master 10.10.107.119 --master 10.10.107.121 \
  --node 10.10.107.123 --node 10.10.107.124 \
  --user root --passwd weiyigeek_test \
  --version v1.17.4 --network calico \
  --pkg-url /opt/kube1.17.4.tar.gz
```

After a successful deployment, output like the following appears:

```
15:37:35 [INFO] [ssh.go:60] [ssh][10.10.107.124:22]:
15:37:35 [INFO] [ssh.go:11] [ssh][10.10.107.124:22]exec cmd is : mkdir -p /etc/kubernetes/manifests
15:37:36 [DEBG] [ssh.go:23] [ssh][10.10.107.124:22]command result is:
15:37:36 [ALRT] [scp.go:156] [ssh][10.10.107.124:22]transfer total size is: 0MB
15:37:36 [INFO] [ssh.go:36] [ssh][10.10.107.124:22]exec cmd is : rm -rf /root/kube
15:37:36 [DEBG] [print.go:20] ==>SendPackage==>KubeadmConfigInstall==>InstallMaster0==>JoinMasters==>JoinNodes
15:37:36 [INFO] [print.go:25] sealos install success.
```
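The `sealos init` command above repeats `--master` and `--node` once per host. The sketch below builds the same command line from space-separated IP lists, using only flags shown in this section. It also warns when the number of masters is even: an etcd cluster needs a majority of its members to keep quorum, so odd sizes such as 3 or 5 are the usual choice. `sealos_init_cmd` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# sealos_init_cmd "MASTER_IPS" "NODE_IPS" VERSION PKG_URL
# Print a `sealos init` command with one --master flag per master IP and one
# --node flag per worker IP. Credentials (--user/--passwd or --pk) and CNI
# options (--network) are left for the caller to append.
sealos_init_cmd() {
  local masters="$1" nodes="$2" version="$3" pkg="$4" ip count=0
  local cmd="sealos init"
  for ip in $masters; do
    cmd+=" --master ${ip}"
    count=$((count + 1))
  done
  for ip in $nodes; do
    cmd+=" --node ${ip}"
  done
  if (( count % 2 == 0 )); then
    echo "warning: ${count} masters; an odd number is recommended for etcd quorum" >&2
  fi
  printf '%s\n' "${cmd} --version ${version} --pkg-url ${pkg}"
}
```

Usage: `sealos_init_cmd "10.10.107.109 10.10.107.119 10.10.107.121" "10.10.107.123 10.10.107.124" v1.17.4 /opt/kube1.17.4.tar.gz`. Before running the printed command, append `--user root --passwd <password>` (or `--pk /root/.ssh/id_rsa`) and `--network calico`.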

Notes:

(1) Every node must have a unique hostname; otherwise deployment fails with the error `duplicate hostnames is not allowed`.